The New Frontier of Artificial Intimacy

When OpenAI CEO Sam Altman announced his company would soon allow ChatGPT to engage in “erotica for verified adults,” he signaled a significant shift in how major AI companies approach mature content. This move represents more than just a policy change—it marks the beginning of a complex new chapter where artificial intelligence meets human intimacy, creating both unprecedented business opportunities and ethical challenges.

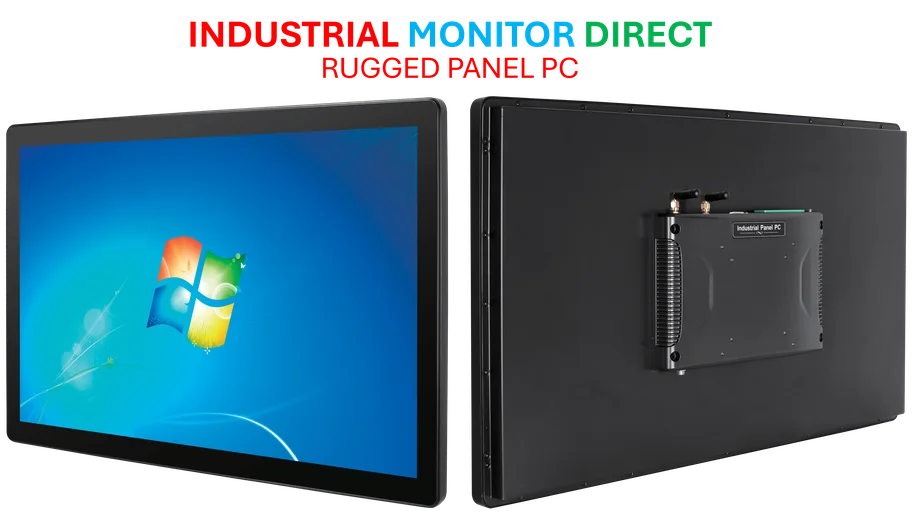


Altman’s statement that his company is “not the elected moral police of the world” reflects a growing recognition among tech leaders that adult content represents a substantial untapped market. However, this pivot toward more permissive content policies comes with considerable risks that earlier entrants into mature AI have already discovered.

The Precedent: Early Movers and Their Struggles

OpenAI won’t be pioneering this space—sexual content has been a driving force behind AI adoption since the technology boom of 2022. Smaller companies and startups have been experimenting with AI-powered intimacy for years, but they’ve encountered significant obstacles along the way.

These early adopters faced everything from payment processor restrictions to platform de-listing, not to mention complex legal questions around content moderation and user safety. The recent signals from OpenAI suggest that larger companies may believe they’ve found solutions to these challenges, or at least that the potential rewards now outweigh the risks.

The Regulatory Minefield

One of the most significant hurdles for AI companies exploring adult content is navigating the complex web of global regulations. What’s permissible in one country may be illegal in another, and AI systems must be sophisticated enough to understand these nuances.

The situation becomes even more complicated as security and privacy expectations evolve in the age of artificial intelligence. Companies must balance user privacy with content moderation requirements, all while ensuring they don’t run afoul of laws governing explicit material.

Safety and Ethical Considerations

Beyond legal compliance, AI companies venturing into adult content face profound ethical questions. How do they prevent their technology from being used to create non-consensual or harmful material? What safeguards are necessary to protect vulnerable users?

These concerns are particularly pressing given that many people have already turned to AI for companionship, sometimes developing emotional dependencies on chatbots. The psychological implications of intimate relationships with artificial entities remain largely unexplored territory, creating potential liabilities for companies in this space.

The Competitive Landscape

OpenAI’s move comes at a time when the broader technology sector is experiencing significant consolidation and competitive pressure. As major players like Google and Microsoft refine their AI offerings, the race to monetize various aspects of artificial intelligence intensifies.

This competitive environment may explain why companies are increasingly willing to explore controversial applications like adult content. With billions in potential revenue at stake, the temptation to push boundaries grows stronger.

The Path Forward

For AI companies considering entry into the adult content space, several key considerations emerge:

- Verification Systems: Robust age verification will be essential to prevent minors from accessing inappropriate content

- Content Boundaries: Clear policies distinguishing between consensual adult content and harmful material

- Transparency: Open communication about how user data is handled in intimate conversations

- Psychological Safeguards: Mechanisms to identify and address unhealthy user dependencies

The success of these ventures will depend not just on technological capability but on responsible implementation. As Altman noted, while companies shouldn’t act as “moral police,” they do have a responsibility to consider the societal impact of their products.

The coming months will reveal whether major AI companies can strike this delicate balance and turn what was once taboo into a sustainable, ethical business model, or whether the challenges will prove too great even for industry giants.

