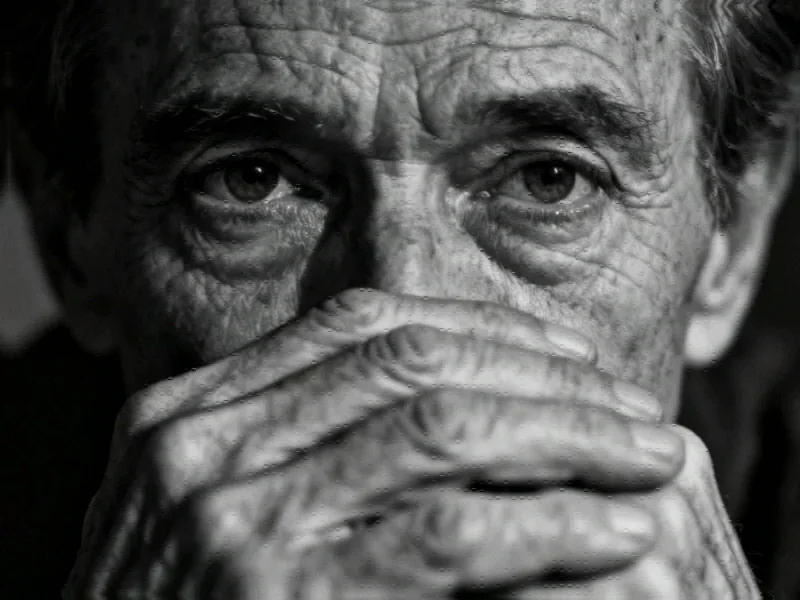

The Rise of Synthetic Suffering in Humanitarian Communications

In a disturbing trend that’s sweeping the humanitarian sector, AI-generated images depicting extreme poverty, vulnerable children, and survivors of sexual violence are becoming commonplace in organizational communications. What began as a solution to budget constraints and consent concerns has evolved into what experts are calling “poverty porn 2.0”: a digital repackaging of exploitative imagery that perpetuates harmful stereotypes while bypassing ethical considerations entirely.

According to global health professionals, these synthetic images are flooding stock photo platforms and being adopted by leading NGOs at an alarming rate. “All over the place, people are using it,” confirmed Noah Arnold of Fairpicture, an organization dedicated to promoting ethical imagery in global development. “Some are actively using AI imagery, and others, we know that they’re experimenting at least.”

The Visual Grammar of Digital Poverty

Arsenii Alenichev, a researcher at the Institute of Tropical Medicine in Antwerp, has been studying this phenomenon extensively. “The images replicate the visual grammar of poverty—children with empty plates, cracked earth, stereotypical visuals,” he explained. Alenichev has documented over 100 AI-generated images of extreme poverty used in social media campaigns addressing hunger and sexual violence.

The scenes he’s collected reveal a pattern of exaggerated, stereotype-perpetuating imagery: children huddled in muddy water, African girls in wedding dresses with tears staining their cheeks, and other decontextualized representations of suffering. In a recent commentary in the Lancet Global Health, Alenichev argues these images represent a dangerous evolution of exploitative humanitarian imagery, moving from real but problematic photography to entirely synthetic depictions that amplify existing biases.

Economic Drivers and Ethical Failures

The proliferation of these images is driven by a combination of financial pressure and ethical convenience. As Arnold noted, US funding cuts to NGO budgets have exacerbated the situation, making cheap alternatives increasingly attractive. Alenichev observed that “it is quite clear that various organizations are starting to consider synthetic images instead of real photography, because it’s cheap and you don’t need to bother with consent and everything.”

This shift comes despite years of sector-wide debate about ethical imagery and dignified storytelling. The convenience of AI-generated content threatens to undo progress made in how vulnerable populations are represented in humanitarian communications.

Marketplace Complicity and Racial Stereotypes

Major stock photo platforms have become unwitting accomplices in this trend. Dozens of AI-generated poverty images now appear on sites like Adobe Stock Photos and Freepik in response to searches for “poverty.” Many bear captions like “Photorealistic kid in refugee camp” or “Caucasian white volunteer provides medical consultation to young black children in African village”—with some selling for approximately £60 per license.

“They are so racialized,” Alenichev commented. “They should never even let those be published because it’s like the worst stereotypes about Africa, or India, or you name it.” The problem reflects a broader challenge: image-generation tools can perpetuate and amplify societal biases when deployed without adequate safeguards.

Platform Responsibility and User Demand

Joaquín Abela, CEO of Freepik, defended his platform’s approach, shifting responsibility to media consumers rather than the platforms hosting the content. He explained that AI stock photos are generated by the platform’s global community of users, who receive licensing fees when their images are purchased.

While Freepik has attempted to address biases in other parts of its library by “injecting diversity” and ensuring gender balance in professional images, Abela acknowledged limitations. “It’s like trying to dry the ocean. We make an effort, but in reality, if customers worldwide want images a certain way, there is absolutely nothing that anyone can do.” His comments point to the tension between market demand and ethical responsibility on platforms that host AI-generated content.

Case Studies: When Major Organizations Cross the Line

The trend isn’t limited to small organizations or individual users. Major international bodies have experimented with AI-generated imagery in sensitive contexts. In 2023, the Dutch arm of Plan International released a video campaign against child marriage featuring AI-generated images of a girl with a black eye, an older man, and a pregnant teenager.

More controversially, the UN posted a YouTube video with AI-generated “re-enactments” of sexual violence in conflict, including synthetic testimony from a Burundian woman describing being raped during her country’s civil war. The video was removed after media scrutiny, with a UN Peacekeeping spokesperson acknowledging “improper use of AI” that “may pose risks regarding information integrity.”

These incidents demonstrate how even well-intentioned organizations can stumble when navigating the ethical complexities of emerging technologies.

The Ripple Effect: Training Future AI on Present Biases

Perhaps the most concerning aspect of this trend is its potential to create a self-perpetuating cycle of bias. Alenichev warned that biased images used in global health communications could “filter out into the wider internet and be used to train the next generation of AI models,” a process known to amplify existing prejudices.

Toward Ethical Alternatives and Industry Response

Some organizations are beginning to respond to these concerns. A spokesperson for Plan International stated that the NGO has, as of this year, “adopted guidance advising against using AI to depict individual children,” explaining that their 2023 campaign used AI-generated imagery to safeguard “the privacy and dignity of real girls.”

Kate Kardol, an NGO communications consultant, expressed concern about the broader implications. “It saddens me that the fight for more ethical representation of people experiencing poverty now extends to the unreal,” she said, recalling earlier debates about “poverty porn” in the sector.

Looking Forward: Balancing Innovation and Ethics

As the humanitarian sector grapples with these challenges, the need for clear ethical frameworks around AI-generated imagery becomes increasingly urgent. The convenience and cost savings of synthetic images must be weighed against their potential to perpetuate harmful stereotypes and undermine years of progress in ethical storytelling.

What remains clear is that as AI technology continues to advance, the humanitarian sector must develop robust guidelines that prevent the digital exploitation of the very communities these organizations aim to serve. The integrity of humanitarian storytelling—and the dignity of vulnerable populations—depends on getting this balance right.

